It is an exciting time to be a learning professional. The digital landscape is transforming the way we learn and there is renewed focus on the need for continual learning and upskilling. While we don’t hear as much about measurement and reporting, there are exciting and significant developments occurring in these areas as well.

I believe six areas in particular will transform our profession in the coming decade. Here’s how.

Standardization and Reporting

There are two very significant developments here, each of which will change the way human capital metrics are reported. First, the International Organization for Standardization published the first-ever “Human Capital Reporting Standards” (ISO 30414) in December 2018. This standard represents the work of a large, international group of experts who spent three years deciding which human capital metrics (measures) should be collected and reported. They started with hundreds of suggested metrics but in the end narrowed the list to 60, which includes 23 for voluntary public reporting by large organizations and 10 for voluntary public reporting by small/medium-sized organizations. The rest of the measures are for internal reporting.

The 10 measures recommended for public reporting by all organizations include: percentage of employees who have completed training on compliance and ethics; development and training cost; total workforce cost; human capital ROI and revenue or profit per employee; turnover rate; number of accidents and number killed during work; number of employees and full-time equivalents.

Other measures recommended for disclosure by large organizations include leadership trust, time to fill vacant positions, percentage of positions filled internally and diversity of the leadership team.

Although the ISO recommendations are voluntary, some countries will enact them into law, which will force multinational companies to decide whether to report the same for all countries. Moreover, even where not mandated by law, pressure is likely to build on organizations to publicly disclose. In the future, why would any employee go to work for an organization that refuses to disclose its human capital metrics?

The second significant development in this area is closely related to the first: The United States Securities and Exchange Commission announced on Aug. 8, 2019, that it has proposed new rules governing disclosure for publicly traded companies. Previously, a company that sold stocks, bonds or derivatives had to discuss 12 items in its financial disclosure, like product or service, competitive environment, order backlog, etc. The only item related to human capital was number of employees. The SEC recognizes in this rule that human capital today plays as large a role in company profitability as physical capital and must be more broadly disclosed. Furthermore, the SEC recognizes that prescriptive guidance to disclose 12 items is unlikely to keep up with the pace of change, so it proposes that all material information must be shared.

Assuming this rule is finalized in early 2020 (effective date is unknown but likely to be in fiscal year 2021 or 2022), how will companies decide what human capital metrics to share to avoid the risk of a shareholder lawsuit for failure to disclose materially important human capital information? Many believe that companies will look to the ISO standards on human capital reporting by large organizations. In this case, even though the ISO standards are not mandated by Congress, companies are likely to adopt something similar. And it won’t stop with publicly traded for-profit companies. Once publicly traded companies begin to report human capital metrics, others will be forced to follow if they wish to attract and retain the best employees.

These two developments have the potential to radically change human capital reporting forever by introducing a level of transparency that is long overdue.

Analytics and the Use of Microdata

As organizations gather and store more data at an employee level, analytics can be used to identify relationships among different measures. For example, organizations are testing the data to see what is associated (or correlated) with employee retention and employee engagement. Do employees who take more learning have higher engagement and retention rates? Or does it depend on the type of learning they take? If so, what types of learning lead to higher engagement and retention?
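As a minimal sketch of this kind of test, the Python below computes a Pearson correlation between learning hours and an engagement score. The records, field meanings and score scale are hypothetical, and a correlation by itself says nothing about whether learning causes engagement.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length lists with at least 2 values")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a variable is constant")
    return cov / sqrt(var_x * var_y)


# Hypothetical employee-level records: (learning hours, engagement score on a 1-5 scale)
records = [(5, 3.1), (12, 3.4), (20, 3.9), (8, 3.0), (30, 4.4), (16, 3.7)]
hours = [r[0] for r in records]
engagement = [r[1] for r in records]

r = pearson(hours, engagement)
print(f"correlation between learning hours and engagement: {r:.2f}")
```

The same function could be run separately for each type of learning to see whether the association differs by course category.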

Second, organizations are analyzing the growing volume of content data that becomes available when courses are instrumented with xAPI or built on other platforms that capture user information, such as time spent on a particular task. This allows learning designers and managers to “look inside” a course and see how long users are spending on each component and perhaps how they are performing or reacting. This in turn is invaluable for redesigning the course for greater learning engagement or following up with specific employees about their experience. Plus, the data are available in real time.

Third, programs and computing power are facilitating the use of microdata, which simply means that data are available at the individual level for further analysis or for follow-up with the employee. This is in contrast to macrodata, which has historically been used at an aggregate level to describe an entire group of learners, perhaps in terms of their average score. So, while data have always been collected from individual employees, the data have traditionally been described and used at a higher group level, often as totals or averages for the group, with no drill down to employee.
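The difference can be illustrated with a small Python sketch. The employee IDs, scores and pass mark are all hypothetical; the point is only that the same data supports both a group average and an individual drill-down.

```python
from statistics import mean

# Hypothetical microdata: one assessment score per employee
scores = {"emp_001": 92, "emp_002": 58, "emp_003": 88, "emp_004": 61, "emp_005": 95}

# Macro view: a single aggregate describing the whole group
group_average = mean(scores.values())

# Micro view: drill down to the individuals below an assumed pass mark
PASS_MARK = 70
needs_follow_up = sorted(emp for emp, score in scores.items() if score < PASS_MARK)

print(f"group average: {group_average:.1f}")
print(f"follow up with: {needs_follow_up}")
```

Here the group average looks acceptable, yet the drill-down surfaces two employees who may need follow-up.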

All three of these trends will continue to grow in importance for our field, enabling the L&D professional to better manage and customize employees’ learning.

Application, Impact and ROI

For years, surveys of learning professionals have shown plans to significantly increase higher-level measurement (level 3 application, level 4 impact and level 5 ROI). While the number measuring at these levels may have increased slightly, there does not appear to have been a significant increase, although we may be about to see one. L&D professionals have always aspired to measure at a higher level than participant reaction (level 1) and learning (level 2), but doing so was difficult. Today, survey tools like SurveyMonkey make data collection easier than ever before, so there really is no excuse for not collecting application and impact data. Just add a question on intent to apply and a question on estimated impact to the post-event survey where the level 1 questions are asked.

Ken Phillips, founder and CEO of Phillips Associates (not related to the ROI Institute’s Patti or Jack Phillips), is doing some exciting work using microdata to reduce the scrap rate and increase application. He uses answers to select post-event survey questions to predict which participants are most likely to apply the learning and which factors are most important at the individual employee level for application. This in turn allows learning professionals to take targeted corrective action with either the employee or their supervisor to address the issues and realize higher application.

The bottom line is we now have the tools to easily collect and act on level 3 and level 4 data, even at the individual employee level. We should use these tools to drive application rates and impact higher, leading to better ROI.

Optimization

Some interesting work is currently being done on the optimization of learning. Kent Barnett, CEO of Performitiv, pulled together a group of 35 thought leaders in April 2019 to form the Talent Development Optimization Council. Inspired by Six Sigma and Lean, Barnett said, “Our primary goal is to develop a continuous improvement measurement process designed specifically for L&D that enables organizations to optimize the impact of their learning programs.” In other words, don’t just stop with level 4 impact and level 5 ROI. Even if these results are good, what could you do to make them better, perhaps by using microdata? The council hopes to recommend ways to measure and improve results, impact and value, including a cost-benefit financial model.

Optimization is the next logical step for those who are already measuring at levels 4 and 5.

AI and Machine Learning

Artificial intelligence is now being used to analyze courses completed by an employee to recommend additional courses for that employee or similarly situated employees. Moreover, when combined with data on the career progression of employees, AI can recommend courses that are correlated with career advancement.

In addition, machine learning is now being applied to the analysis of open-ended questions in the post-event or follow-up surveys to summarize the results. For example, it can organize similar responses and produce a frequency distribution, just like a person would if they were analyzing the results. This means that we can employ open-ended questions at scale and harness valuable insights that otherwise would have been missed.
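To make the idea of a frequency distribution concrete, here is a deliberately simple Python sketch. It uses hand-written keyword matching as a stand-in for machine learning, which would learn the groupings rather than rely on a fixed list; the themes, keywords and comments are all hypothetical.

```python
from collections import Counter

# Hypothetical themes and trigger keywords (an ML model would learn these groupings)
THEMES = {
    "pace": ("fast", "slow", "rushed", "pace"),
    "relevance": ("relevant", "job", "apply", "practical"),
    "instructor": ("instructor", "facilitator", "trainer"),
}


def tag_themes(comment):
    """Return the set of themes whose keywords appear in the comment."""
    text = comment.lower()
    return {theme for theme, words in THEMES.items() if any(w in text for w in words)}


def theme_frequencies(comments):
    """Count how many comments mention each theme; unmatched comments go to 'other'."""
    counts = Counter()
    for comment in comments:
        themes = tag_themes(comment)
        counts.update(themes if themes else {"other"})
    return counts


comments = [
    "The pace was too fast for me.",
    "Very relevant to my job.",
    "Great instructor, but it felt rushed.",
    "Lunch was cold.",
]
freq = theme_frequencies(comments)
for theme, n in freq.most_common():
    print(theme, n)
```

Plain substring matching is crude (it would, for instance, find "fast" inside "breakfast"), which is one reason trained models are attractive for this task at scale.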

The Use of Measures to Manage

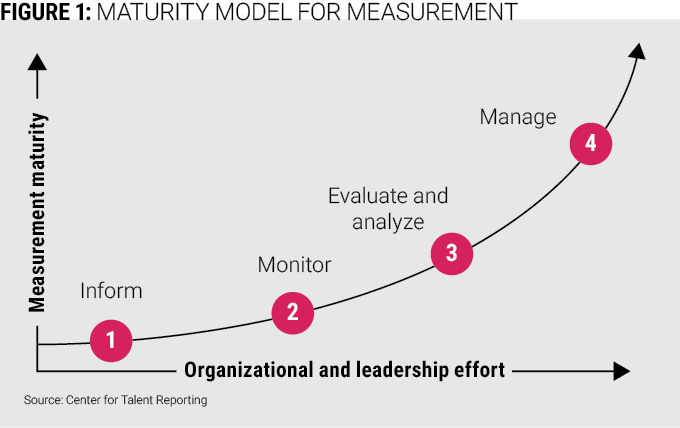

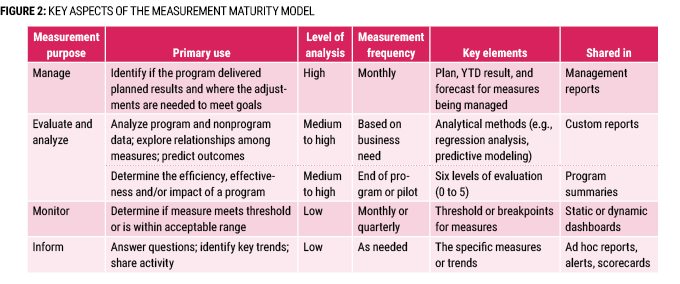

Most maturity models today have predictive analytics as the highest-level use of measurement, but I would suggest there is actually a higher level, which is management. In Figure 1, we have measurement to inform (answer questions, identify trends) as the foundational reason to measure. This is where practitioners spend most of their measurement effort, producing scorecards or dashboards with just historical data. While this is important, we would all agree that the profession needs to move beyond scorecards.

The next level up is to use measures to monitor. Often a measure has been performing in an acceptable range (like a level 1 score of 90 percent favorable or better) and we just want to ensure that it stays in the acceptable range. This is called monitoring and the resulting dashboard is often color-coded, showing in red the measures that are out of compliance. Monitoring requires the setting of thresholds, so it takes more work than simply reporting results.
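A threshold check of this kind might look like the Python sketch below. The measure names, threshold values and the yellow warning band are assumptions added for illustration; only the idea of flagging out-of-range measures in red comes from the discussion above.

```python
# Hypothetical thresholds: minimum acceptable value for each monitored measure
THRESHOLDS = {"level_1_favorable_pct": 90.0, "completion_rate_pct": 85.0}


def status(measure, value, warn_margin=2.0):
    """Return 'red' below the threshold, 'yellow' within warn_margin above it, else 'green'."""
    floor = THRESHOLDS[measure]
    if value < floor:
        return "red"
    if value < floor + warn_margin:
        return "yellow"
    return "green"


# Hypothetical latest results for the dashboard
latest = {"level_1_favorable_pct": 87.5, "completion_rate_pct": 86.0}
for measure, value in latest.items():
    print(f"{measure}: {value} -> {status(measure, value)}")
```

The extra work of monitoring lies mostly in agreeing on the numbers in `THRESHOLDS`, not in the check itself.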

The third level encompasses traditional program evaluation where the five levels are used to determine if the program was effective. Another activity at this level of maturity is the higher-level analytics discussed earlier. In this case, statistics like correlation and regression are used to explore the relationships between measures (like amount of learning and employee engagement). When a good statistical relationship is found, that relationship can be used to predict the value of one measure (like engagement or retention) based on the value of another measure (like amount or type of learning). This is predictive analytics and an exciting new area for L&D and HR in general. Like determining isolated impact for level 4 in a program evaluation, predictive analytics requires a much higher level of analysis than that required for monitoring.
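As a rough illustration of predicting one measure from another, the Python sketch below fits a least-squares line to hypothetical data and uses it to estimate engagement for a given amount of learning. Real predictive work would involve more variables, more data and checks on how well the model fits.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length lists with at least 2 values")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("cannot fit a line when all x values are identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(slope, intercept, x):
    """Predicted y for a given x using the fitted line."""
    return slope * x + intercept


# Hypothetical data: annual learning hours and engagement score (1-5 scale)
learning_hours = [5, 8, 12, 16, 20, 30]
engagement = [3.1, 3.0, 3.4, 3.7, 3.9, 4.4]

slope, intercept = fit_line(learning_hours, engagement)
print(f"predicted engagement at 25 hours: {predict(slope, intercept, 25):.2f}")
```

A positive `slope` here only describes the association in the sample; it does not show that adding learning hours would raise engagement.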

There is, however, a higher-level purpose for measurement that has even greater potential for L&D than predictive analytics. This is the active management of programs and initiatives to deliver planned values. This requires significant analysis to create a plan for the targeted measure and significant analysis during the year (or life of the program) to understand why the measure is not on plan and what steps can be taken to get back on plan before the year ends. This is the hard work of management. Figure 2 lays out the different aspects of each maturity level, including the analysis and reporting used.

The most complex application of measuring to manage can be found in the planning and execution of a program in support of an important organizational goal, like increasing sales or improving quality. In this case, L&D professionals must work closely with the goal owner (like the head of sales) to confirm that learning has a role to play in helping achieve the goal, agree on the program and the planned impact of learning on the organization goal (like 2 percent higher sales due to learning), and agree on the planned values for all the key efficiency and effectiveness measures required to deliver that impact (like number of participants, timing and application rate). Once the year is underway, it is unlikely everything will go according to plan, so both parties will have to actively manage the key measures to come as close to plan as possible.
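One way to picture the in-year analysis is a comparison of plan against a year-end forecast. The Python sketch below uses a naive run-rate forecast and hypothetical numbers; it suits cumulative measures such as number of participants, not rates such as application rate, and a real forecast would also reflect what is already scheduled.

```python
from dataclasses import dataclass


@dataclass
class Measure:
    name: str
    annual_plan: float  # planned value for the full year
    ytd_actual: float   # cumulative actual result so far this year


def linear_forecast(measure, months_elapsed):
    """Naive year-end forecast: extend the year-to-date run rate over 12 months."""
    if not 1 <= months_elapsed <= 12:
        raise ValueError("months_elapsed must be between 1 and 12")
    return measure.ytd_actual / months_elapsed * 12


def gap_to_plan(measure, months_elapsed):
    """Forecast minus plan; a negative value means the measure is trending below plan."""
    return linear_forecast(measure, months_elapsed) - measure.annual_plan


# Hypothetical plan agreed with the goal owner, reviewed at mid-year
participants = Measure("participants", annual_plan=1200, ytd_actual=450)
gap = gap_to_plan(participants, months_elapsed=6)
print(f"{participants.name}: forecast gap to plan = {gap:+.0f}")
```

A shortfall like this is the prompt for the management conversation: why is the measure behind, and what can be done to get back on plan before the year ends?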

In my opinion, data-driven management represents the highest level of maturity in an organization and the greatest opportunity for L&D in the measurement and reporting space. More than any of the other exciting trends discussed so far, this has the potential to deliver the greatest incremental impact for our efforts and to make us the valued, strategic business partners we aspire to become.

What Lies Ahead

We are a young profession with a lot of work ahead of us, which means that each of us can play a role in shaping our future. So experiment, question and build on the work of others to advance our thinking and increase the impact we can have on individuals and organizations.