Imagine you are an executive education provider at a prestigious business school. You have some of the most respected faculty in the world, with great content, innovative design, and participants from some of the most admired and respected organizations attending your programs regularly. The feedback from your reaction questionnaires is awesome. The participants love your material and the experience you provide. Clearly, this program is successful — right?

Now, let’s suppose your participants leave this program and never do anything with what they’ve learned. With no application of content, there is no impact on their work, their community or their families. If this occurred — no application and no impact — would you still consider your program successful?

This is a tough question. But for the executive education sponsors, those who pay for it, the clear answer is “no,” your program is not successful. As an executive education provider, you could say, “We gave them a great program and we know they learned powerful content. It is up to the participants to make it work. It’s out of our control.”

This creates a dilemma for executive education. To be successful in the eyes of those who pay for it, usually the top and senior executives, it must add value to the organization. That often means moving beyond classic behavior change measurement, usually evaluated through 360-degree feedback. The new challenge is for program providers to address the issue of application and impact up front and not wait to be pulled into these issues by the client.

The Problem

Executive education has been an important phenomenon in the past two decades. Most universities offer executive or continuing education in some format, and the cost, quality and reputation of these programs have changed tremendously. It is now estimated to be a $30 billion industry in the U.S. alone. Globally, the figure is well over $100 billion.

With this tremendous growth, use and cost comes a need for accountability, causing some universities and other education providers to measure the value of these services. Most important, it is causing some of the key clients who spend large sums of money on these programs to question their return on investment.

According to Mihnea Moldoveanu and Das Narayandas’ “The Future of Leadership Development,” published in the March/April 2019 issue of Harvard Business Review, “Chief learning officers find that traditional programs no longer adequately prepare executives for the challenges they face today and those they will face tomorrow. Executive education programs also fall short of their own stated objectives. Traditional executive education is simply too episodic, exclusive and expensive.”

When clients ask for the value of executive education, they normally ask after the program has been completed. That’s probably too late to show the value. If you want to drive business value, you must start with business value. It is rare for executive education programs to start with the business need. But they could, and, with some programs, they should.

The Opportunity

To meet this challenge, we will borrow design thinking principles from the innovation field. Design thinking has been in the innovation industry for more than three decades. It started with the book “Design Thinking” by Peter Rowe in 1987 and developed further through many others, including Idris Mootee’s “Design Thinking for Strategic Innovation.”

The main point of design thinking is that if you want specific results, you design for those results. This requires that you clearly define the desired result and have the whole team work toward achieving it. For example, Uber wanted to create a ride service that was less expensive, faster and more convenient than the classic taxi, with a better customer experience. They accomplished this by designing for three measures: lower cost, less time and a better experience.

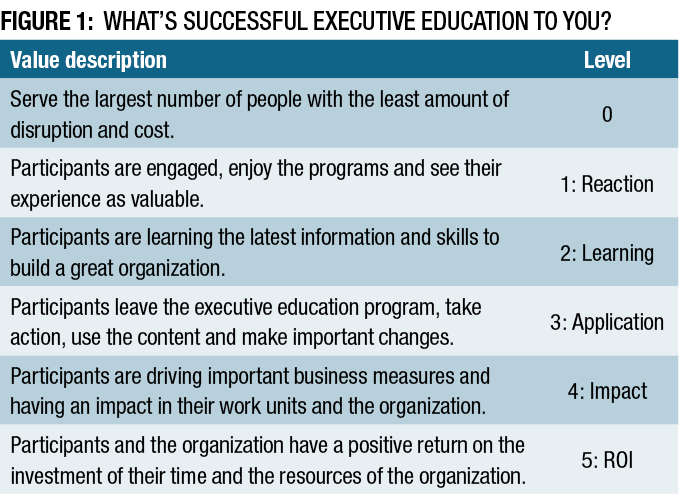

Let’s reflect on how the success of executive education is currently defined. Consider the options for defining the success of executive education shown in Figure 1. Take a few minutes to select your ultimate definition of success. Select only one value description as the most important.

If you are like the hypothetical prestigious school mentioned at the beginning of this article, you probably selected one of the first two options: serving a large number of participants from high-profile, admired organizations and having them be very pleased with their participation.

As you examine the list, there is a tendency to want to select other options, particularly toward the bottom of the table. Unfortunately, the vast majority of executive education programs are not evaluated at the impact level, and they are rarely evaluated at the application level. When application is evaluated, it is usually through a process similar to 360-degree feedback.

The good news is that the evaluation of executive education programs can be (and is being) done at the impact and ROI levels. It is just a matter of designing for the desired results and making sure the entire team is focusing on those results. Following is a system that can meet that challenge.

The Fundamentals

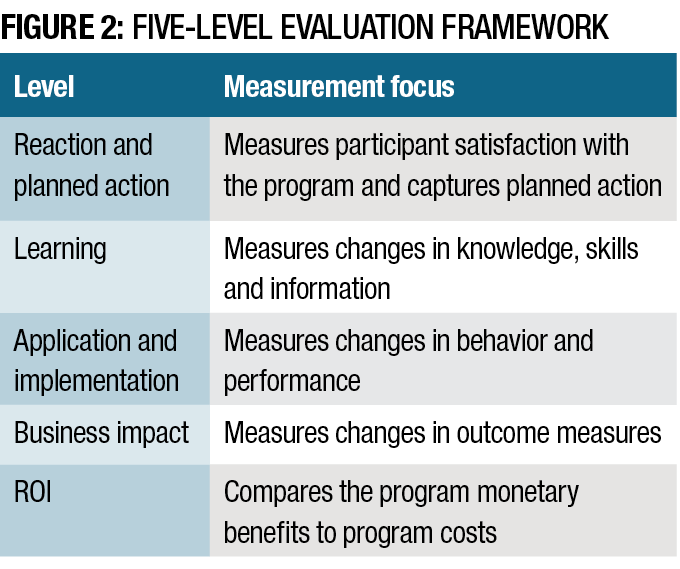

Most programs are evaluated using logical steps of value, and their sequential use can be linked to a variety of guidelines and models. At the heart of our ROI methodology is the variety of data collected throughout the process and reported at different intervals, as shown in Figure 2. This flow of data is appropriate for executive education as employees react to the programs, learn content in the program, apply what they have learned and have an impact in their work. When impact is converted to money and compared to the cost of the executive education program, the ROI may be calculated.

In addition to these five levels of outcomes (reaction, learning, application, impact and ROI), there are some impact measures that are not easily converted to money, and they are left as intangibles. These are measures such as teamwork, collaboration, image, stress and job engagement. By balancing financial impact with measures that address individual perspectives and the systems and processes that support the transfer of knowledge, skills and information, a complete story of program success can be told.

If you can deliver business results at a reasonable cost, you can influence the funders who make decisions about executive education programs, protect the investment when it needs to be protected and enhance it when it needs to be increased. The key is to influence the investment decisions with data that connect business results to the program and add value to the organization.

Designing for Results

The definition of success for executive education programs should be business impact for organizations, the outcome desired by top executives. With success clearly defined, the challenge is to design for it. Designing for the results takes the risk and mystery out of the process.

The concept is simple. At every stage in the process, the executive education program is designed with the end in mind — and the end is business results. All stakeholders complete their part of the process with the business results in mind. This transforms the classic program development cycle into a simple design thinking model for business results with eight steps to design for success.

Step 1: Start With Why

Align executive education programs with the business. The why becomes the business need and the proposed program is aligned to the specific business measure, selected by the executive before attending the program.

Step 2: Make it Feasible

Select the right solution. To make sure this is the right solution to drive the business measure, the executive is asked a simple question: “Can you use the content of this program with your team to improve this measure?” If the answer is no, another measure is selected until there is a yes.

Step 3: Expect Success

Design for results. The success of learning is now defined as, “Executives are using the learning to drive important business impact measures in their work units.” Objectives are set to push accountability to the business impact level. With reaction, learning, application and impact objectives, the designers, developers, facilitators, participants and managers of participants (if they are involved) know what they must do to deliver and support results.

Step 4: Make it Matter

Design for input, reaction and learning. This ensures that the right people are involved in executive education programs at the right time and that the content is important, meaningful and actionable. Reaction and learning data are measured.

Step 5: Make it Stick

Design for application and impact. This ensures that the executive is actually using the learning (application) and that it has an impact. Action plans are often used to guide the executive through application and impact. Results are captured from executives at both application and impact levels. Barriers must be removed or minimized, and enablers must be enhanced, to drive results.

Step 6: Make it Credible

Measure results and calculate ROI. With impact data in hand, the results must be credible. The first action is to isolate the effects of the executive education program on the impact data. Several options are available to tackle this step. If ROI is planned, the next action is to convert data to money. Then the monetary benefits are compared to the cost of the program in an ROI calculation. This builds two sets of data that sponsors will appreciate: business impact connected directly to the program and the financial ROI, which is calculated the same way that a CFO would calculate the return on a capital investment.
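The arithmetic behind this step can be sketched in a few lines of Python. This is a minimal illustration, not the article's own tooling: it assumes the standard ROI formula (net benefits divided by costs, times 100), and it assumes the program's effect is isolated with a single attribution factor, which is only one of the several options mentioned above. All names and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ProgramResult:
    """Hypothetical inputs for one executive education program."""
    measured_improvement: float  # annual change in the business measure, in units
    isolation_factor: float      # assumed share of the change due to the program (0 to 1)
    value_per_unit: float        # assumed money value of one unit of the measure
    program_cost: float          # fully loaded cost of the program


def monetary_benefits(result: ProgramResult) -> float:
    """Isolate the program's effect, then convert it to money."""
    isolated_improvement = result.measured_improvement * result.isolation_factor
    return isolated_improvement * result.value_per_unit


def roi_percent(result: ProgramResult) -> float:
    """Standard ROI: net benefits divided by cost, expressed as a percentage."""
    if result.program_cost <= 0:
        raise ValueError("program_cost must be positive")
    net_benefits = monetary_benefits(result) - result.program_cost
    return net_benefits / result.program_cost * 100


# Illustrative numbers only; they are not taken from any real program.
example = ProgramResult(
    measured_improvement=200,
    isolation_factor=0.5,
    value_per_unit=1500,
    program_cost=100_000,
)

if __name__ == "__main__":
    print(f"Monetary benefits: {monetary_benefits(example):,.0f}")
    print(f"ROI: {roi_percent(example):.0f}%")
```

With these made-up inputs, half of a 200-unit improvement is credited to the program, so the benefits are 150,000 against a cost of 100,000, for an ROI of 50 percent. Measures that cannot be credibly converted to money would be left out of this calculation and reported as intangibles.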

Step 7: Tell the Story

Communicate results to key stakeholders. Reaction, learning, application, impact and perhaps even ROI data form the basis for a powerful story. Storytelling is critical, and it’s a much better story when you have business impact. Narratives and numbers are both needed.

Step 8: Optimize Results

Use black box thinking to increase funding. Designing for results usually drives the needed results, but there’s always an opportunity to make the results even better. This involves improving the program so the ROI increases in the future. Increased ROI makes a great case for more funds. When funders (executives) see that the program has a positive ROI, it will usually be repeated, retained and supported.

Following these eight steps provides a simple system to use design thinking to deliver business results for executive education programs. It’s not a radical change, but it involves tweaking what has been used and done in the past. The change is shifting the responsibility to drive the business results to all the stakeholders. It also redefines the success of learning: Success is not just absorbing the skills and knowledge, or even using them in your work. It is now defined as driving impact in the organization.

When executive education programs are designed using these eight steps, we also shift the funders’ perception of executive education from a cost to an investment. With this approach, funders clearly see that executive education reaps an ROI. If the funders or executives do not see it as an investment, executive education programs may be perceived as a cost that could be cut, controlled or reduced. Unfortunately, this has occurred in many situations.

In summary, using design thinking to design and evaluate your executive education programs leads to optimization of the ROI as programs are improved. Optimization leads to better decisions about allocating funds in the future. The challenge is to incorporate a measurement and evaluation system that shows funders the value of executive education programs at the investment level up front, so they are more likely to invest in your program and support the participants.

This article was originally published as “Designing for Results” in the July/August 2019 issue of Chief Learning Officer.