Square peg. Round hole. This is the problem with learning measurement.

The most well-known learning measurement models have been around for decades, and yet the industry continues to struggle with its measurement practices. We’re great with surveys. We have plenty of test scores. We know who completed the training, how long they took and what they clicked along the way. But most learning and development teams still cannot answer critical stakeholder questions: Is the training actually working? Which tactics are having the greatest impact? Why should we invest more resources into employee development?

But it’s not for lack of trying. Measurement is a bigger L&D priority now than it has ever been. Ninety-six percent of L&D leaders say they want to measure learning impact, according to LEO Learning’s “Measuring the Impact of Learning 2019.” Donald Taylor’s 2020 L&D Global Sentiment Survey includes multiple data-related entries among the list of the year’s hottest industry topics, such as learning analytics (No. 1), artificial intelligence (No. 5), consulting more deeply with the business (No. 7) and showing value (No. 9). However, fewer than 20 percent of companies say they are highly effective at learning measurement, according to Brandon Hall Group’s 2018 Modern Learning Measurement study.

L&D wants to fix learning measurement. Stakeholders are demanding accountability. Plenty of models are available from which L&D pros can choose. So then what’s the issue?

The Real Problem

The problem with learning measurement is that the problem doesn’t begin with learning measurement. Workplace training looked very different when popular measurement models were created. The internet didn’t exist. Training only happened in classrooms and on the job. L&D was fundamentally limited in what it could measure. Measurement models reflect this focus on place-and-time training. They have tried to adapt with the times, but there’s only so far you can take the foundational concepts. This has put L&D in a “square peg, round hole” situation.

So, L&D just needs a new measurement model that takes into account how workplace learning works now, right? Well, it’s not that simple. Thankfully, we can learn a lot about this topic from billboard advertising. Yes, the key to fixing learning measurement is billboards.

A Lesson from Marketing

L&D already borrows from marketing on topics such as content design and campaign-based delivery. It’s time for another lesson, this time on data. Modern marketing is a data-enabled function. They don’t just know that you consumed an ad. They use everything they know about you to first develop the strategy, then target advertising and ultimately determine how tactics influence your buying decisions. Marketing can measure impact.

But they didn’t always have this capability. In fact, 20 years ago, marketing was in a place very similar to where L&D is today. Before the internet, marketing leaned on mail, print, radio and television ads. Oh, and billboards. They used a limited understanding of their audience to get as many eyes onto an advertisement as possible. Then, they did their best to correlate changes in business results to marketing activities. Did they know that driving past a billboard caused you to buy a new breakfast cereal? No. But they knew sales went up after the billboards went up, so they kept doing it.

Fast forward 20 years. Marketing has not gotten any better at measuring the impact of billboards. They’re not a data-rich tactic. There’s only so much you can do to measure their impact without considerable time, effort and expense. Instead of repeatedly trying new models to measure data-poor tactics, marketing evolved how they do what they do. The internet gave birth to digital marketing. Mobile and social technology provided even more data-rich tactics. Marketing still uses billboards, but they’re a small piece of a dynamic toolset.

Unfortunately, many traditional L&D tactics remain data-poor. It doesn’t matter which measurement model you apply. Even with extra time and effort, there’s only so much data you can get. Before measurement can be fixed, L&D must first evolve the way it approaches its work. L&D must adopt data-rich tactics that align with how learning actually happens in the workplace.

The Principles of Good Data

The biggest consideration for improving L&D data practices is a basic question: What problem are you trying to solve? All of the data in the world won’t matter if L&D teams don’t start by clarifying the goals of the organization. What does the business need to do, and how can L&D leverage data to make it happen?

Are you trying to accelerate onboarding? Are you trying to improve key performance indicators? Do you need to enable organizationwide reskilling? All of the above?

Data can only help you if you know what questions to ask.

Next, L&D needs more than just data. You need the right data. You need data that helps you understand the needs of your employees and how their performance does (or does not) change based on your solutions. Learning doesn’t start and end with a course. It’s a personal, continuous experience. Therefore, L&D measurement must also become personal and continuous.

As you begin to think differently about measurement, consider the five key principles of good data.

- Velocity: Data must be gathered and analyzed at the right speed.

- Variety: Different types of data will be needed to tell the overall learning and performance story.

- Veracity: Data must be trustworthy and free of bias and disruptive outliers.

- Volume: The appropriate amount of data must be collected to enable the holistic measurement strategy.

- Value: Data must be selected for collection and analysis based on its ability to foster the right questions and deliver value to the business and employees.

Modern data practices are built on these five principles — the same principles applied in marketing.

Identifying Your Data

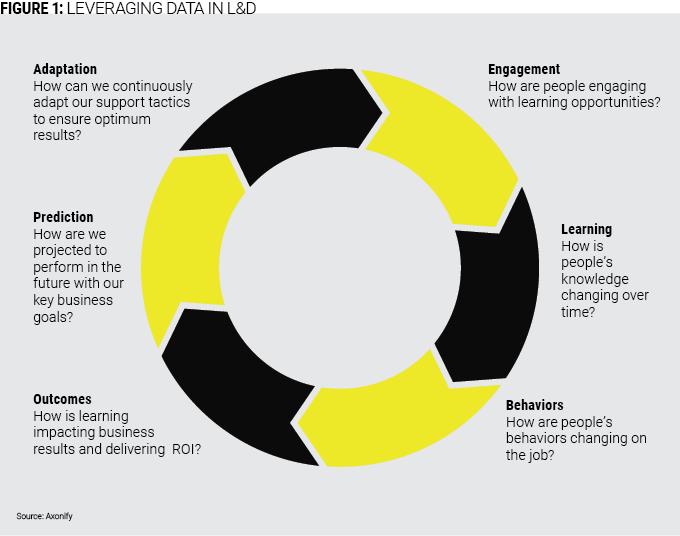

Just figuring out where to start is one of the biggest obstacles L&D pros reference when it comes to improving measurement. After you know what problem(s) you are trying to solve, you must determine the types of data you will need to power your solution(s). The specific data points will vary by organization and problem to be solved. That said, most high-value data fits within four categories.

Business data: How does the organization know there is a problem at all? Start with this question to determine the existing business metrics that will be of most value to L&D. This may include sales results, net promoter score, recordable safety incidents, first-call resolution, etc. If stakeholders are unable (or unwilling) to provide this data, L&D will be limited in its measurement capabilities.

People data: Who is L&D trying to help solve this problem? Organizations usually have a lot of employee data, including demographics, role, team structure, location, tenure and so on. This data can help you better target your solutions and understand how specific groups are (or are not) benefiting from L&D solutions.

Performance data: What is happening in real life? How does the organization determine whether employees are demonstrating the right behaviors on the job? In some cases, this data already exists. For example, in safety-critical environments, auditors often record behavior observation data to identify trends and potential risks. L&D can leverage this data to determine how their solutions are (or are not) impacting real-world employee behavior. This is critical for connecting learning to business results.

Learning data: How is employee knowledge and confidence changing? L&D must expand the definition of “learning data” to include more than test scores, smile sheets and course tracking. These data points are still needed, but L&D must be able to assess an employee’s current capability, regardless of the training they completed in the past. This will help L&D proactively design and implement right-fit, persistent solutions before performance gaps appear.

This is not a comprehensive list of the data L&D needs to improve measurement. For example, some teams are applying sentiment and network analysis to determine how people interact in the workplace and learn from one another. These categories show how much the L&D data puzzle must expand so you can get the pieces you need to put your own measurement strategy together.
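To make the four categories concrete, here is a minimal Python sketch of how they might be connected once you have access to them. Everything in it is hypothetical: the employee IDs, team names, field names and numbers are invented for illustration, and your own sources will look different. The only idea it demonstrates is that people data supplies the grouping, learning and performance data are linked per employee, and business data is attached at the team level.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records. Every ID, field name and value is invented for illustration.
people = {                                   # people data: who are we helping?
    "e1": {"team": "north", "tenure_years": 1},
    "e2": {"team": "north", "tenure_years": 4},
    "e3": {"team": "south", "tenure_years": 2},
    "e4": {"team": "south", "tenure_years": 6},
}
learning = {"e1": 0.90, "e2": 0.85, "e3": 0.60, "e4": 0.55}     # knowledge score, 0 to 1
performance = {"e1": 0.95, "e2": 0.90, "e3": 0.70, "e4": 0.65}  # share of observed behaviors done correctly
business = {"north": 2, "south": 7}                              # recordable safety incidents per team


def summarize_by_team(people, learning, performance, business):
    """Group employee-level learning and performance data by team
    and attach the team-level business metric."""
    grouped = defaultdict(lambda: {"knowledge": [], "behavior": []})
    for employee_id, person in people.items():
        # Skip employees missing from either source rather than guessing values.
        if employee_id not in learning or employee_id not in performance:
            continue
        bucket = grouped[person["team"]]
        bucket["knowledge"].append(learning[employee_id])
        bucket["behavior"].append(performance[employee_id])
    return {
        team: {
            "avg_knowledge": round(mean(values["knowledge"]), 3),
            "avg_behavior": round(mean(values["behavior"]), 3),
            "incidents": business.get(team),
        }
        for team, values in grouped.items()
    }


summary = summarize_by_team(people, learning, performance, business)
for team, row in sorted(summary.items()):
    print(team, row)
```

A side-by-side view like this does not prove that training caused a result. It simply puts the pieces in one place so you can start asking better questions about which groups are (or are not) benefiting.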

Moving Data Forward

You have identified the types of data you need to solve your problem. Now, you have some work to do.

Start with existing sources. Where can you already access some of this data? HR should have the people data. Business stakeholders should have the business data and maybe some performance data. L&D has pieces of the learning data. Connect with data experts on these teams to understand what data is available and how you can access it. This should happen early in the process, before you actually need to apply the data.

Next, consider evolving your tactics. It’s time to go beyond the billboard. L&D can apply an evolved perspective on data to gather more and better data from existing tactics. If this isn’t enough to solve the problem, you can evolve and augment your tactics to become more data-rich. For example, a traditional classroom session yields minimal data beyond completions, assessment scores and survey results. However, this tactic can be enriched by adding new, meaningful data collection points before and after the session. Progressive organizations are asking participants to complete assessments that demonstrate their knowledge and confidence in key topics before the session. They are provided with ongoing reinforcement activities to measure how they retain important information long-term. Microlearning activities are also being applied to capture data on knowledge retention during the few minutes employees have available in their workday. This provides a real-time understanding of what employees do (and do not) know.
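As a rough illustration of what those extra collection points make possible, the Python sketch below compares assessment scores taken before a session, right after it and at a later reinforcement check. The scores are invented, and the "retention" calculation (the share of the session gain still present at follow-up) is one simple assumption among many possible definitions, not an established industry formula.

```python
from statistics import mean

# Hypothetical assessment scores (0-100) for the same employees at three points.
# IDs and numbers are invented for illustration only.
scores = {
    "e1": {"pre": 40, "post": 80, "followup": 70},
    "e2": {"pre": 60, "post": 90, "followup": 90},
    "e3": {"pre": 50, "post": 70, "followup": 55},
}


def knowledge_gain(pre, post):
    """Points gained between the pre-session and post-session assessment."""
    return post - pre


def retention(pre, post, followup):
    """Share of the session gain still present at follow-up (assumed definition).
    Returns None when there was no gain to retain."""
    gain = post - pre
    if gain <= 0:
        return None
    return (followup - pre) / gain


report = {
    employee_id: {
        "gain": knowledge_gain(s["pre"], s["post"]),
        "retention": retention(s["pre"], s["post"], s["followup"]),
    }
    for employee_id, s in scores.items()
}

avg_gain = mean(row["gain"] for row in report.values())

for employee_id, row in report.items():
    print(employee_id, row)
print("average gain:", avg_gain)
```

With only completions and a single end-of-class score, none of these numbers would exist. Adding the before and after data points is what turns a data-poor tactic into one you can actually track over time.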

Consider new capabilities. Improving L&D data practices is not just about fixing learning measurement. Data is required to implement a growing list of modern learning practices, including:

- Personalization: adjusting a digital learning experience based on the specific needs of an individual employee.

- Adaptive learning: providing the right learning experience to the right person at the right time.

- Recommendation: highlighting additional resources or experts based on proven need and value.

- Coaching: providing managers with specific, actionable steps to help an employee improve their performance.

Learning Measurement, Transformed

How do you fit a square peg into a round hole? You can’t. You need a new peg, one that is specifically designed to fit this particular hole. The same is true for learning measurement. One model cannot solve the industry’s problem. Instead, each L&D team must ask their own questions, apply proven, data-rich principles and develop their own measurement strategy.

In addition to not knowing where to begin, L&D pros often cite prioritization as a reason for measurement problems. Why should this be important to every L&D team? The answer to this question is another question: If you can’t tell if what you’re doing is working, what’s the point in doing it at all? Stakeholders expect L&D solutions to have a positive impact on their people and their business. When L&D cannot prove impact or apply data to innovate their tactics like other functions, their value comes under scrutiny. Everyone anecdotally agrees that learning is important. It’s the way we do our jobs that comes into question — and rightly so.

This may sound complicated. This may sound difficult. But doing what it takes to finally fix learning measurement is clearly worth the effort. According to a 2019 study by Axonify, companies that apply modern data practices see a 29 percent average impact on business results from their learning programs. This isn’t the result of a survey. It’s not the weak correlation that comes from old-school billboards. Real impact measurement is possible. But first, we have to shatter the way L&D thinks about measurement.